by gk | January 28, 2022

You probably know that our user-facing product for providing privacy, safety, and security online is Tor Browser. Tor Browser allows millions of people to easily exercise their human right to privacy, within the framework of a familiar web browser. For many years, Tor Browser was the only web browser freely available that provided anything like its level of anti-tracking, anti-fingerprinting, and holistic privacy protections.

In this post, we want to share a little Tor Browser history with you: the origins of our features and designs, and how many of our innovative privacy and security features have been adopted by other browsers.

In the beginning: a fork of Firefox

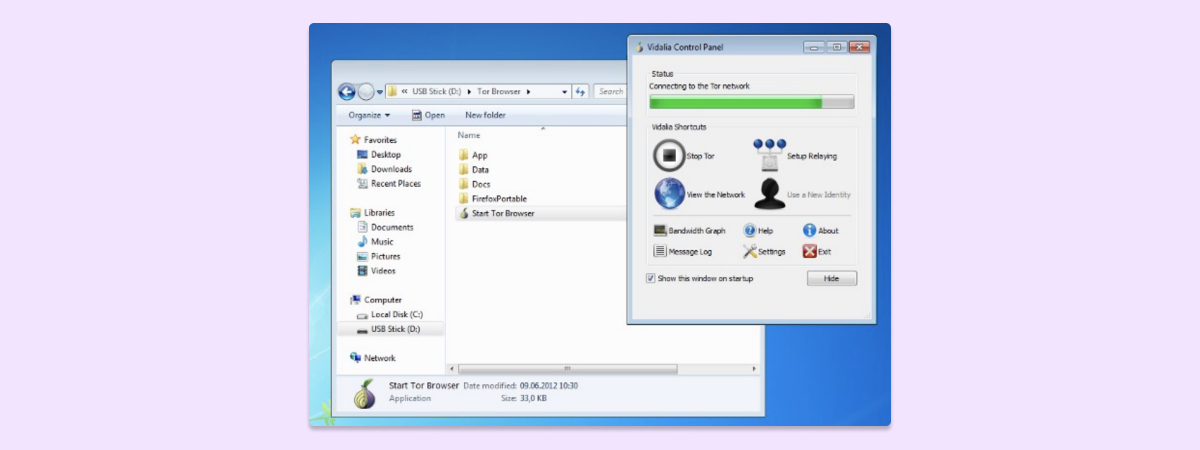

Before Tor Browser existed, you may remember a little app called Vidalia. You could use Vidalia to start a connection to the Tor network, and if you wanted to route your web browsing through Tor, you would need to configure your browser proxy settings to do so.

This solution had many issues–in particular, configuring your proxy settings inside of a browser to use Vidalia was not an easy, user-friendly task. Additionally, while Tor provides good protections at the network level, Vidalia did not offer protections against tracking and privacy invasion that exploit the web browser itself.

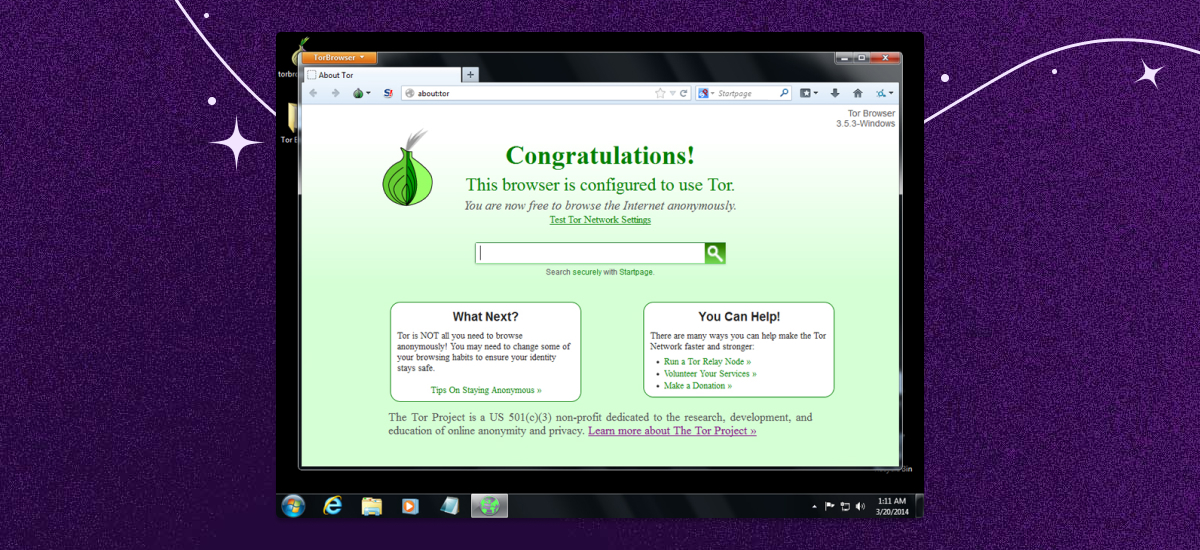

In late 2007, we released the Firefox-based Tor Browser Bundle, which started to address those shortcomings. Tor Browser Bundle set important browser preferences and included Tor, Torbutton (a Firefox extension providing the necessary privacy guarantees), and other components to offer web browsing privacy and protection.

Shipping a browser bundle made browsing the web via Tor much easier, but this package turned out not to be sufficient, for both user experience and technical reasons.

First, Torbutton allowed users to easily toggle their connection to the Tor network on and off. This toggle concept didn’t exist in other browsers at the time, though, and we found that it contradicted the mental browsing model of most of our users. As a result, it was easy for users to shoot themselves in the foot by toggling Tor off and forgetting to turn it back on again.

Additionally, providing the toggle option in a safe way turned out to be a development challenge, as it hit a number of corner cases in the Firefox code that were not particularly high on Mozilla's priority list to fix.

Further, the APIs that were available for Torbutton to work around privacy problems in Mozilla's code turned out to be less and less sufficient for our needs. In early 2011, we therefore started to streamline our browser bundle by both abandoning the Torbutton toggle model and patching Firefox where needed. For example, we added proper SOCKS5 support and fingerprinting resistance/disk avoidance patches to our fork of Firefox.

Project Uplift: bringing Tor Browser protections to Firefox

Privacy online requires all of us. Tor Browser might not be the product that every user online would use, and that’s OK–our mission is about making sure privacy is accessible and showing how it can be done. That’s why we started working with Mozilla (and other vendors like Google) early on to get their Private Browsing Modes adapted according to a privacy-by-design approach and to uplift patches as soon as we wrote them.

While that process started bearing fruit with Mozilla during our work on reproducible builds, it got fully up to speed with the Tor Uplift project. From 2016 on, Mozilla dedicated several engineers to help us get our code changes into Firefox and write patches from scratch for some of our missing defenses. This was a multi-year effort that started with our "first-party isolation" work, aimed at defeating cross-site linkability, and continued with upstreaming patches against browser fingerprinting.

While patches against both cross-site linkability and fingerprinting landed in Firefox code, they were set to “off” by default, giving us an easy way to activate them for Tor Browser. This helped Tor and was a step in the right direction, but it is further proof that, back then, privacy was not deemed important enough to enable those features by default.
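To make the idea of first-party isolation a little more concrete, here is a minimal Python sketch. It is a toy model, not Firefox's or Tor Browser's actual implementation: the `CookieJar` class, its `first_party_isolation` flag, and the `.example` hostnames are all hypothetical names chosen for illustration. The point it shows is that when stored state is keyed by the site in the URL bar as well as by the host that set it, a third-party tracker embedded on two different sites can no longer read the same identifier on both.

```python
from collections import defaultdict


class CookieJar:
    """Toy cookie store (hypothetical; not the browser's real data structure)."""

    def __init__(self, first_party_isolation=False):
        # Off by default, mirroring how the uplifted defenses were shipped.
        self.first_party_isolation = first_party_isolation
        self._store = defaultdict(dict)

    def _key(self, first_party, cookie_host):
        # With isolation on, state is partitioned by the site in the URL bar.
        if self.first_party_isolation:
            return (first_party, cookie_host)
        # With isolation off, every site shares one bucket per cookie host.
        return (None, cookie_host)

    def set_cookie(self, first_party, cookie_host, name, value):
        self._store[self._key(first_party, cookie_host)][name] = value

    def get_cookie(self, first_party, cookie_host, name):
        bucket = self._store.get(self._key(first_party, cookie_host), {})
        return bucket.get(name)


# Without isolation: a tracker embedded on two sites sees the same ID.
shared = CookieJar()
shared.set_cookie("news.example", "tracker.example", "id", "abc123")
linked = shared.get_cookie("shop.example", "tracker.example", "id")

# With isolation: the ID set under news.example is invisible under shop.example.
isolated = CookieJar(first_party_isolation=True)
isolated.set_cookie("news.example", "tracker.example", "id", "abc123")
unlinked = isolated.get_cookie("shop.example", "tracker.example", "id")
```

With the flag left at its default, `linked` holds the tracker's identifier, so visits to the two sites can be tied together. With the flag switched on, `unlinked` is `None`, because the tracker's state lives in a separate bucket for each first-party site.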

We would like to take the opportunity here to give a big shout-out to everyone at Mozilla who made this project possible. Project Uplift does not exist anymore, but the collaboration between Mozilla and the Tor Project has been one of the most significant steps towards making the privacy standards we defend at the Tor Project a standard for the industry.

Privacy going mainstream

Today, we’re witnessing a gradual but monumental shift in a new direction. Other browser developers have followed our lead and started adding privacy and safety features to their products (and sometimes adopted our innovations into their browsers). An industry that once insisted that its business model lived and died on surveillance is now hosting conversations about privacy and making changes to third-party cookies, data collection, and tracking practices–and even allowing more user visibility and control over these options.

Part of this trend has to do with states and nations around the world passing legislation (e.g., GDPR, CCPA) with fines and consequences for companies that do not respect user privacy. After all, money has always been the motivation for change for these companies.

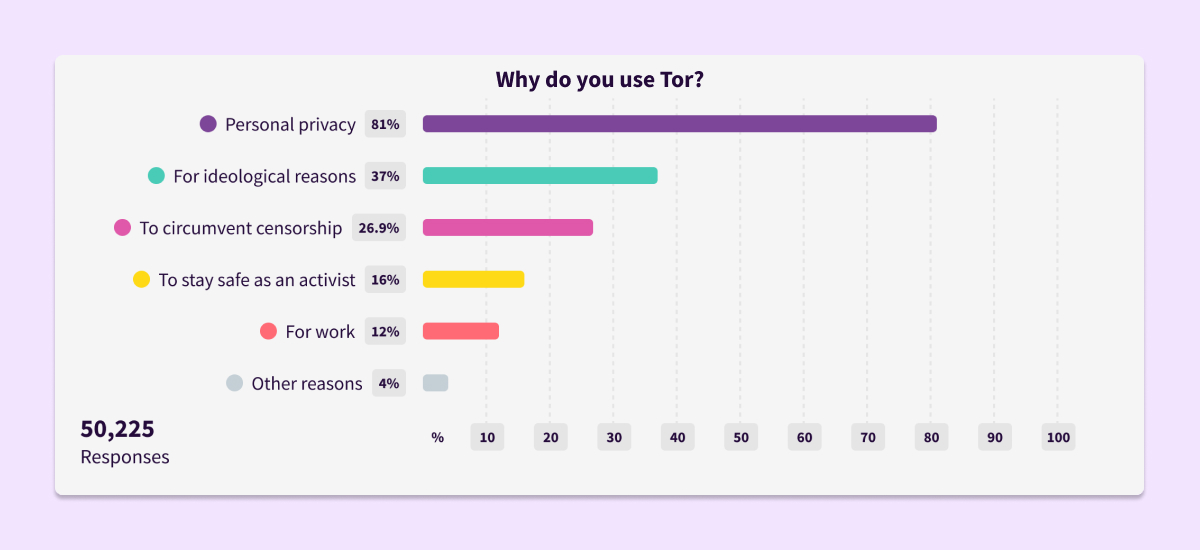

But beyond (or before) legislation comes the fact that people are sick and tired of using products that do not care about their right to privacy–or worse, abuse and sell their data without their consent. User research from many different sources shows that privacy is important to people while using apps or browsing the internet. In a recent survey of more than 50,000 Tor Browser users, 81% indicated they use Tor for personal privacy.

You can also see what the research shows in action: people are standing up both to demand privacy and to defend encryption. For example, look at the recent mobilization around Apple’s plans to scan iPhones and iPads, and Apple’s decision to pause the rollout of this client-side scanning because of the loud protest by users demanding privacy. The fact that more people understand what’s at risk when we lose privacy, and are fighting back to demand it, has made real change in the browser landscape as well.

As a result of this growing consumer demand and legislation, our focus on privacy is taking hold in the rest of the industry. Today, we’re seeing that features like Tor Browser’s “first-party isolation” have been adapted and developed further for browsers like Firefox, Safari, and Brave and are enabled by default for millions of users, giving them the tracking protection they deserve.

You can also see our influence in the browser world by looking at the Brave browser, which includes the Private Tab with Tor feature. The Private Tab with Tor brings the privacy protection that the Tor network provides to a private tab experience. Brave is also a privacy-focused browser in its own right, so both its regular browsing experience and its private tab experience offer privacy protection features that other browsers don’t have.

Privacy has finally become mainstream.

There’s still more work to do

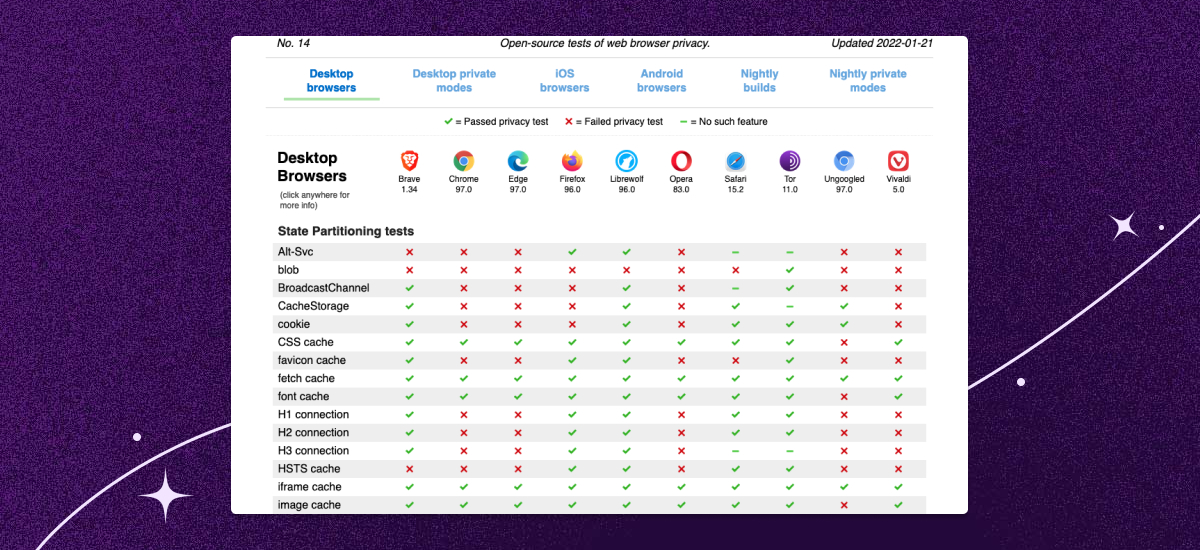

Are we done with Tor Browser, or the work to bring privacy to everyone online, given that all the major browser vendors now take privacy seriously? No doubt, today we are in a much better situation than ever before. But Tor Browser still carries dozens of patches that have yet to be upstreamed to Firefox, and it offers one of the most comprehensive private browsing experiences available today.

Plus, the work of protecting our users’ privacy isn’t one-and-done. We still need to keep an eye on changes in the surveillance and advertising markets, and work to neutralize new fingerprinting and tracking vectors, as they develop. This work takes time and money!

One easy way to watch progress in action is to look at the open-source tests of web browser privacy provided by privacytests.org. You can see how other browsers stack up against Tor Browser and how many tools are coming closer to offering Tor Browser’s level of protection, but also that there is still more to be done.

This is a companion discussion topic for the original entry at https://blog.torproject.org/tor-browser-advancing-privacy-innovation/