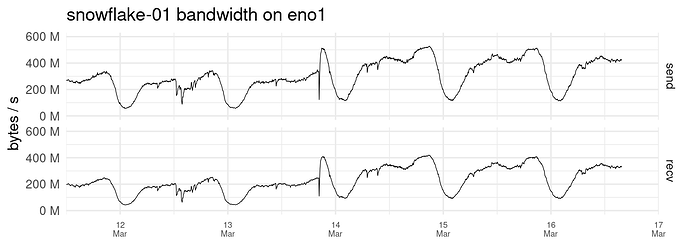

It is most likely snowflake!140 / snowflake#40262. That fix and its deployment uncorked a big performance increase, which allowed more of the available bandwidth to be used. See the snowflake-01 graph in the issue "Deploy snowflake-server for QueuePacketConn buffer reuse fix (#40260)" (#40262) in the Snowflake issue tracker on the Tor Project GitLab.

The reduced number of connections is probably because the bug that was fixed could cause spurious disconnection errors; see here.